2026/04/26 Microsoft Cloud Solutions

Ctelecoms

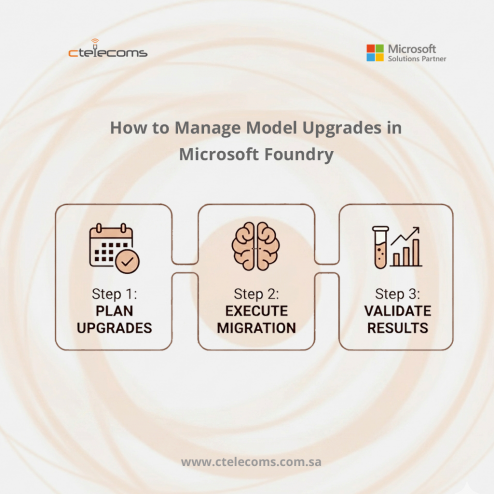

Staying current with AI technology is no longer just about clicking "update." In the world of Microsoft Foundry, upgrading your AI models is a continuous process that requires a solid plan. Because models evolve and older versions eventually retire, waiting until the last minute can lead to broken applications and service downtime.

At Ctelecoms, we help businesses in Saudi Arabia navigate these transitions. Here is a practical, enterprise-grade strategy to handle model upgrades and migrations without the stress.

Think of an AI model upgrade like updating your database or your security software. It shouldn't be an emergency. Since Microsoft eventually retires old model versions, a "set and forget" approach will eventually cause your app to stop working.

In practice, that means assigning a Model Owner for every app and keeping a Retirement Tracker so you know when each version expires.

Sometimes you can upgrade the brain (the model) without changing the bridge (the API version your code calls). If you change both at once, the risk of something breaking is much higher, and it becomes harder to tell which change caused a problem. Always test the two separately to avoid "silent" errors, where the code runs without failing but the AI's answer is wrong.
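The "one change at a time" rule can be enforced with a small pre-deployment check. The following is a minimal sketch, not a Foundry feature: `RuntimeConfig`, `changed_fields`, and `is_safe_step` are hypothetical names, and the version strings are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RuntimeConfig:
    """Hypothetical record of what an app is pinned to."""
    model_version: str  # the "brain": which model version serves requests
    api_version: str    # the "bridge": which API version the code calls


def changed_fields(current: RuntimeConfig, candidate: RuntimeConfig) -> list[str]:
    """Return the names of the fields that differ between two configs."""
    return [
        name
        for name in ("model_version", "api_version")
        if getattr(current, name) != getattr(candidate, name)
    ]


def is_safe_step(current: RuntimeConfig, candidate: RuntimeConfig) -> bool:
    """Treat a rollout step as safe only if it changes at most one field."""
    return len(changed_fields(current, candidate)) <= 1


# Placeholder values for illustration only
current = RuntimeConfig(model_version="model-v1", api_version="2024-10-21")
model_only = RuntimeConfig(model_version="model-v2", api_version="2024-10-21")
both = RuntimeConfig(model_version="model-v2", api_version="v1")

print(is_safe_step(current, model_only))  # True
print(is_safe_step(current, both))        # False
```

A check like this could run in a release pipeline so that a combined model and API change is split into two separately tested steps.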

Using the latest tools, you can point your code to a specific deployment alias to keep things stable.

```python
import os

from azure.ai.projects import AIProjectClient
from azure.identity import DefaultAzureCredential

# Required in new Foundry: project endpoint (documented format)
# https://.services.ai.azure.com/api/projects/
project = AIProjectClient(
    credential=DefaultAzureCredential(),
    endpoint=os.environ["AZURE_AI_PROJECT_ENDPOINT"],
)

deployment_alias = os.environ["MODEL_DEPLOYMENT_NAME"]  # e.g., "prod-gpt4o" or "candidate-gpt4o"

# Pin the API contract if you are using the versioned inference surface (YYYY-MM-DD).
# (If you move to the v1 API, you typically don't need dated api_version pins.)
api_version = os.environ.get("AZURE_OPENAI_API_VERSION", "2024-10-21")

with project.get_openai_client(api_version=api_version) as client:
    resp = client.chat.completions.create(
        model=deployment_alias,
        messages=[
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": "Summarize the key risks of model upgrades."},
        ],
        temperature=0.2,
    )
    print(resp.choices[0].message.content)
```

You can't manage what you can't see. Build a simple list of every AI model your company uses. Note down which region it is in, whether it's a "standard" or "provisioned" deployment, and exactly when it is scheduled to retire.
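Such an inventory does not need special tooling to start with. The sketch below is one possible shape for it, assuming a plain in-memory list; `ModelRecord`, `days_until_retirement`, and `retiring_within` are hypothetical names, and every app, owner, region, and date shown is made up for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ModelRecord:
    """One row of the model inventory (all fields are illustrative)."""
    app: str
    owner: str              # the Model Owner responsible for this app
    deployment: str         # deployment alias the app points at
    region: str
    deployment_type: str    # "standard" or "provisioned"
    retirement_date: date   # scheduled retirement of the underlying model


def days_until_retirement(record: ModelRecord, today: date) -> int:
    """Number of days left before the model behind this record retires."""
    return (record.retirement_date - today).days


def retiring_within(inventory: list[ModelRecord], today: date, days: int) -> list[ModelRecord]:
    """Records whose retirement falls within the window, soonest first."""
    due = [r for r in inventory if days_until_retirement(r, today) <= days]
    return sorted(due, key=lambda r: r.retirement_date)


# Made-up inventory entries
inventory = [
    ModelRecord("support-bot", "owner-a", "prod-gpt4o", "region-a", "standard", date(2026, 9, 30)),
    ModelRecord("doc-search", "owner-b", "prod-embeddings", "region-b", "provisioned", date(2027, 3, 31)),
]

today = date(2026, 7, 1)
for record in retiring_within(inventory, today, days=120):
    print(record.app, record.deployment, days_until_retirement(record, today))
# prints: support-bot prod-gpt4o 91
```

The same records could live in a spreadsheet or a database table; what matters is that the retirement date is stored next to the owner and the deployment it affects.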

Not every app needs the latest version on day one. We recommend a tiered approach:

Microsoft provides alerts through Azure Service Health. Models go through stages: Preview → General Availability → Legacy → Retired. Once a model hits "Legacy" status, your team should already be testing its replacement.
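The lifecycle rule above can be written down as a simple lookup, so that a tracker can flag apps that are behind schedule. This is a minimal sketch that uses the four stages named in this article; the `Stage` enum, the `recommended_action` function, and the wording of the returned messages are assumptions rather than anything provided by Azure.

```python
from enum import IntEnum


class Stage(IntEnum):
    """Lifecycle stages in the order described in the article."""
    PREVIEW = 1
    GENERAL_AVAILABILITY = 2
    LEGACY = 3
    RETIRED = 4


def recommended_action(stage: Stage, replacement_under_test: bool) -> str:
    """Map a model's stage to the action the owning team should take."""
    if stage is Stage.RETIRED:
        return "migrate now"
    if stage is Stage.LEGACY and not replacement_under_test:
        return "overdue: start testing the replacement"
    if stage is Stage.LEGACY:
        return "on track: finish testing and schedule the cutover"
    return "no action: keep monitoring alerts"


print(recommended_action(Stage.LEGACY, replacement_under_test=False))
# prints: overdue: start testing the replacement
```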

Don’t guess if a new model is better—prove it. Microsoft Foundry includes Evaluations that let you compare versions. You should have a "Golden Dataset"—a list of your most important questions and the perfect answers you expect.

Before you flip the switch for everyone, run a small test like this to check for safety and relevance:

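```python
import json
import os
import tempfile

from azure.ai.evaluation import (
    evaluate,
    RelevanceEvaluator,
    GroundednessEvaluator,
    ContentSafetyEvaluator,
)
from azure.identity import DefaultAzureCredential

# Model config (deployment alias, not version)
model_config = {
    "azure_endpoint": os.environ["AZURE_OPENAI_ENDPOINT"],
    "api_key": os.environ["AZURE_OPENAI_API_KEY"],
    "azure_deployment": os.environ["MODEL_DEPLOYMENT_NAME"],
}

# Define evaluators. Relevance and groundedness are model-graded and take the
# model config. The content safety evaluator takes a credential and a project
# reference instead. Recent SDK versions accept the project endpoint URL here;
# older ones may expect a dict with subscription_id, resource_group_name,
# and project_name.
evaluators = {
    "relevance": RelevanceEvaluator(model_config),
    "groundedness": GroundednessEvaluator(model_config),
    "safety": ContentSafetyEvaluator(
        credential=DefaultAzureCredential(),
        azure_ai_project=os.environ["AZURE_AI_PROJECT_ENDPOINT"],
    ),
}

# Golden dataset (example)
dataset = [
    {
        "query": "List key risks in model upgrades",
        "response": "Model upgrades may affect latency, output format, and safety behavior.",
        "context": "Model upgrades change runtime behavior and may impact downstream systems.",
    },
    {
        "query": "Explain rollback strategy",
        "response": "Use side-by-side deployments and config-based traffic routing.",
        "context": "Foundry supports deployment aliases and canary routing.",
    },
]

# evaluate() reads its data from a JSONL file, so write one record per line
with tempfile.NamedTemporaryFile(
    "w", suffix=".jsonl", delete=False, encoding="utf-8"
) as f:
    for row in dataset:
        f.write(json.dumps(row) + "\n")
    data_path = f.name

# Run evaluation
results = evaluate(
    data=data_path,
    evaluators=evaluators,
)

print(results["metrics"])
```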

By following these three steps, you can keep your business running smoothly:

Reach out to our team today to start your migration strategy.